feat: allow to discover runner statuses#1268

Conversation

11c632f to

1e6e23b

Compare

mumoshu

left a comment

mumoshu

left a comment

There was a problem hiding this comment.

Sorry for the delayed review!

LGTM overall, but it would be great if you could confirm my comments 🙏

|

@fgalind1 I tried my best to rebase your awesome work onto our main branch. Would you mind testing it? |

Thanks @mumoshu as always for the review - I've address the comments from you and @Fleshgrinder I've tested this flow with the feature on and off and it's working as expected 👍 |

|

@fgalind1 Thanks! We're almost there- the last thing in terms of controller code is the failing TestNewRunnerPodFromRunnerController. Seems like you need to add the new envvar to the testdata |

|

I've update the workflow to just use a hardcoded dockerhub name, it's not a secret anyway @fgalind1 pull and merge master again |

awesome - thanks @toast-gear - after merge master it's working again 👍 |

|

@fgalind1 Hey! This has been always in my head. Sorry for the delay, I do realize this is now 3 months old and I'm very eager to get this merged! I had reviewed this once again this morning and, unfortunately, it turned out that a part of this enhancement (conceptually) conflicts with the new container mode support #1546. The new container mode expects the user to pre-create a service account used by the runner pod, whereas this enhancement dynamically creates a service account for each runner pod(which lacks permissions necessary for the new container mode). Please correct me if I'm saying something wrong. I'm planning to modify this a bit so that (1)the dynamically created service account would also have any permissions required for the new container mode(so that this feature can be used along with the new container mode) and/or (2)this feature can optionally use a pre-created serviceaccount, so that the user can provided either of both sets of permissions required for the new container mode and this feature, to the pre-created serviceaccount. I'm working hard to make it happen earlier, but probably it won't make it before 0.25.0, which I'm planning to cut this weekend if everything went well. So, this might get merged in next or the week after the next. Hope it works. Thanks for your contribution and patience 🙏 |

|

I did my best fixing conflicts in 96d7925. Wishing it works |

config/rbac/role.yaml

Outdated

| metadata: | ||

| creationTimestamp: null | ||

| name: manager-role | ||

| name: {{ include "actions-runner-controller.managerRoleName" . }} |

There was a problem hiding this comment.

This seems messed up. This is a resource loaded by ARC's kustomize config, which has no access to these Helm template expressions

| imagePullSecrets: [] | ||

| nameOverride: "" | ||

| fullnameOverride: "" | ||

| useRunnerStatusUpdateHookEphemeralRole: false |

There was a problem hiding this comment.

I started to wonder if this can be improved after #1546.

One might want to choose if they want ARC to create a service account for the runner pod, and separately enable additional features like the kubernetes container mode and/or this status update hooks.

Considering all the possibles variations of configs, perhaps something like this would be better:

runner:

serviceAccount:

create: true # This will let ARC create a service account for each runner pod

#name: <specify the name of existing SA. Makes sense only when `create: false`

statusUpdateHook:

enabled: true #=> This enables te runner status update hook and adds the role and role binding to either the existing or the dynamically created service account

#Uncomment if you want the kubernetes container mode.

# In case runner.serviceAccount.created=true,runner.statusUpdateHook.enabled=true,containerMode=kubernetes is specified,

# ARC will create a runner service account for each runner pod and adds all the permissions required for both the container mode and the status update hooks

#containerMode: kubernetes

There was a problem hiding this comment.

I believe these are indeed 2 different features but the scope a bit different also.

containerMode is something you want to add to your runners, while status update hook I believe is a more horizonal feature (e.g this PR adds a new column in the runner's table to show the running job, which applies for all runners. In other words I believe it makes more sense to be an on/off feature but to the controller - rather than be a switch per runner. While for the containerMode I do believe that makes sense in the context of the runner. Thoughts?

There was a problem hiding this comment.

Thanks for confirming! You're absolutely correct.

In my previous comment, I made two mistakes.

First, I confused the runner service account vs workflow job pod's service account. This feature affects the former and the new container mode is related to the latter.

Second, my suggested values.yaml snippet did look like containing settings for runners, not for the controller. Configuring runners is out of the scope of the ARC's chart. It might make sense a bit if it was for default runner configs, as we already have some runner defaults related values like image.actionsRunnerRepositoryAndTag. This feature should indeed be a controller-level feature as you pointed out. We might better not conflate the new container mode related settings and this feature under a single umbrella key, at least.

There was a problem hiding this comment.

That said, perhaps it would look ok if we just omitted the containerMode key from my previous example?

runner:

serviceAccount:

create: true # This will let ARC create a service account for each runner pod

statusUpdateHook:

enabled: true #=> This enables the runner status update hook. If serviceaccount.create=true, this also creates the role and bind it to the dynamically created service account

To let ARC use an existing runner pod service account, you'd say:

runner:

serviceAccount:

name: mysa # The name of an existing SA. Valid only when `create: false`

# In this case you don't need to give ARC the permission to create SA at all, which might be preferable to some folks for security reasons.

statusUpdateHook:

enabled: true # This doesn't automatically create a role and rolebinding associated to the existing SA, similarly to the top-level `serviceAccount.name` which his used to specify the existing SA for the controller-manager pod.

Perhaps it might be a good idea to imply runner.serviceAccount.create=true when you set runner.statusUpdateHook.enabled=true:

runner:

statusUpdateHook:

enabled: true #=> This enables the runner status update hook. In order to do so, ARC automatically creates a runner pod serviceaccount and a role with necessary permissions, and binds the role to the SA.

This way, it would look like a controller-wide on/off feature, while providing enough flexibility to let the user decide whether to bring their own SA for runner pods, or not.

WDYT?

There was a problem hiding this comment.

First, I confused the runner service account vs workflow job pod's service account. This feature affects the former and the new container mode is related to the latter.

Sorry, this was again a complete misunderstanding of mine! The new container mode works by giving an existing SA to every runner pod so that it can create workflow job pods from within runner pods. Never mind about this. But I think my suggestion in #1268 (comment) is still valid.

It's just that we won't need to provide the container mode related settings in helm charts as it's a runner-level feature.

There was a problem hiding this comment.

I think my suggestion in this thread can be distilled into just one sentence considering the scope of this pull request. That's "Can we rename useRunnerStatusUpdateHookEphemeralRole to runner.statusUpdateHook.enabled, so that the values will be kept structured great even if we added an ability to let ARC use a pre-created SA as the default runner pod SA in the future?"

In other words, even I mentioned it in my previous examples, we don't need to add support for runner.serviceAccount.create or runner.serviceAccount.name in this PR. That might be out of the scope of this PR.

There was a problem hiding this comment.

sounds good to me - thanks for the detailed analysis :) I've done the rename to runner.statusUpdateHook.enabled in ec02bb7 (#1268)

@mumoshu I've added option #1 here d3e1f96 - let me know what you think That way is consistent - it can let user provide a custom service account (that may be used for kubernetes or this feature) or if it is not provided we create one with the right permissions for kubernetes and/or statuses |

controllers/runner_controller.go

Outdated

| return ctrl.Result{}, err | ||

| } | ||

|

|

||

| needsServiceAccount := runner.Spec.ServiceAccountName != "" && (r.UseRunnerStatusUpdateHookEphemeralRole || runner.Spec.ContainerMode == "kubernetes") |

There was a problem hiding this comment.

I think runner.Spec.ServiceAccountName != "" is an indication that the user wants ARC to use the specified existing service account, whereas this condition results in the opposite. Perhaps we'd better invert it so that it looks like:

| needsServiceAccount := runner.Spec.ServiceAccountName != "" && (r.UseRunnerStatusUpdateHookEphemeralRole || runner.Spec.ContainerMode == "kubernetes") | |

| needsServiceAccount := runner.Spec.ServiceAccountName == "" && (r.UseRunnerStatusUpdateHookEphemeralRole || runner.Spec.ContainerMode == "kubernetes") |

| }...) | ||

| } | ||

|

|

||

| if runner.Spec.ContainerMode == "kubernetes" { |

There was a problem hiding this comment.

Awesome! Thanks for the addition

There was a problem hiding this comment.

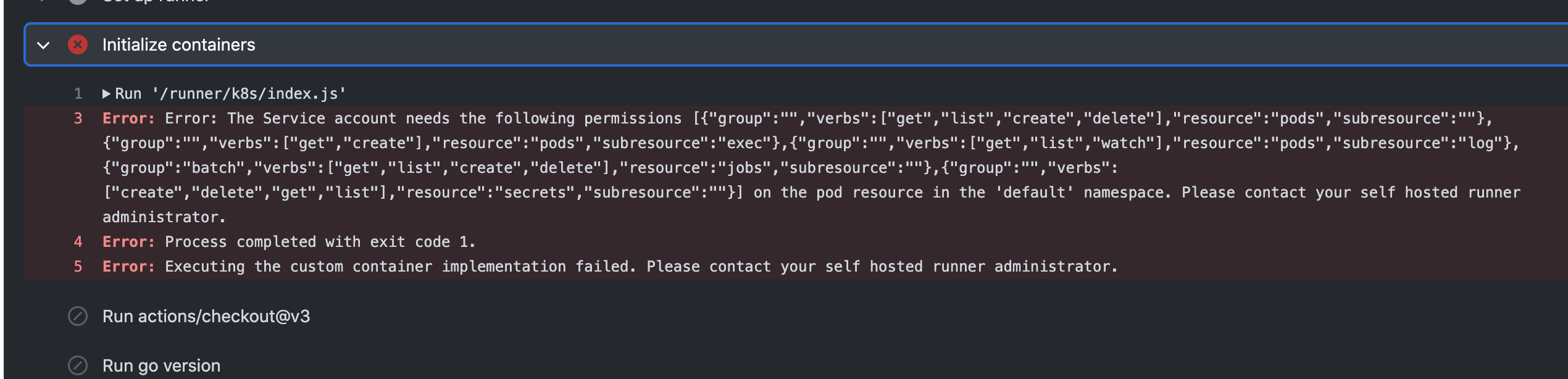

Enabling this resulted in ARC unable to create the role due to an error like:

{"level":"error","ts":"2022-07-10T01:31:04Z","logger":"actions-runner-controller.runner","msg":"Retrying as failed to create Role org-runnerdeploy-ngmsw-pwd5r resource","runner":"default/org-runnerdeploy-ngmsw-pwd5r","error":"roles.rbac.authorization.k8s.io \"org-runnerdeploy-ngmsw-pwd5r\" is forbidden: user \"system:serviceaccount:actions-runner-system:actions-runner-controller\" (groups=[\"system:serviceaccounts\" \"system:serviceaccounts:actions-runner-system\" \"system:authenticated\"]) is attempting to grant RBAC permissions not currently held:\n{APIGroups:[\"\"], Resources:[\"pods/exec\"], Verbs:[\"get\" \"create\"]}\n{APIGroups:[\"\"], Resources:[\"pods/log\"], Verbs:[\"get\" \"list\" \"watch\"]}\n{APIGroups:[\"\"], Resources:[\"secrets\"], Verbs:[\"create\" \"delete\"]}","stacktrace":"github.com/actions-runner-controller/actions-runner-controller/controllers.(*RunnerReconciler).processRunnerCreation\n\t/workspace/controllers/runner_controller.go:322\ngithub.com/actions-runner-controller/actions-runner-controller/controllers.(*RunnerReconciler).Reconcile\n\t/workspace/controllers/runner_controller.go:133\nsigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Reconcile\n\t/go/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.2/pkg/internal/controller/controller.go:121\nsigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).reconcileHandler\n\t/go/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.2/pkg/internal/controller/controller.go:320\nsigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).processNextWorkItem\n\t/go/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.2/pkg/internal/controller/controller.go:273\nsigs.k8s.io/controller-runtime/pkg/internal/controller.(*Controller).Start.func2.2\n\t/go/pkg/mod/sigs.k8s.io/controller-runtime@v0.12.2/pkg/internal/controller/controller.go:234"}

As the error indicates, this is probably due to ARC's controller-manager role lacks permissions for pods/exec and pods/log, and create/delete of secrets. Without the permissions, Kubernetes seems to think ARC is trying to do a privilege escalation (from ARC controller-manager's lesser permission set, to the runner pod's greater permission set), which is apparently forbidden by Kubernetes.

There was a problem hiding this comment.

Probably we'd need another chart value flag to make this privilege escalation possible, and it should be orthogonal to statusUpdateHook 🤔

|

I'm currently testing this on top the current main branch and hopefully merge this soon as long as I don't see any major issues. Thanks for your patience! |

| APIGroups: []string{""}, | ||

| Resources: []string{"secrets"}, | ||

| Verbs: []string{"get", "list", "create", "delete"}, | ||

| }, |

There was a problem hiding this comment.

After fixing two minor issues I found (https://github.com/actions-runner-controller/actions-runner-controller/pull/1268/files#r912319021 and https://github.com/actions-runner-controller/actions-runner-controller/pull/1268/files#r917331632), it worked with the "kubernetes" container mode, too 🎉 I'll submit a follow-up PR or two for those issues.

One last thing to note- the hook updates the runner status to e.g. Registering and Idle before/after the registration. It's great. But I suspect it might have a race condition with the runner controller's logic that syncs the runner pod status to the runner resource:

If the runner controller's resync period passed and hence it reconciled the runner resource immediately after the hook update the runner status to Idle, the runner status might be "corrected" to Running(=pod status phase). So it can look a bit flappy if you watched the runner status phase transitions continuously (by e.g. running kubectl get runner -w and triggering a bunch of workflow runs). It might not be a big deal, but might be worth a fix.

I think I'm confident this works almost perfectly, and we know what the remaining issues are and how to address those. That said, I consider this is now good to be merged!

Thanks again for your patience and contribution @fgalind1 😄

Lovely! thanks also for your help @mumoshu |

This is a new optional feature that allows to set the

.status.phaseof a Runner to a more specific/accurate phase based on the status of the runner. This leverages the recent runner feature actions/runner#1737 that allows to set hooks on job start and job end. The end goal is to make it easier for admins or however manages runners via ARC to easily see what each runner is doinge.g Created, Registering, Idle, Running, Idle, Running...entrypoint.shwill update the runner status to Registering and Idle as it goes trough the runner registration. Runner will set the status to Running (along with the run number and repo using that runner at that moment) on job start via the runner hook and runner back to idle on job endIn order to do so - this requires the Runner pod to be able to update its own

.statusby itself; thus, this PR makes sure that whenever we're creating a new runner, we create a custom role, service account and role binding that has access only to update the runner/status of his own.All these things to make this work (RBAC and the runner status) can be optionally enabled with the chart option

useCustomRunnersRBAC. By default is disabled and this should have no impact in the current behavior. At some point this may be defaulted to true or just remove the old behavior but this was implemented this way to have it as an opt-in featureExample of how it looks like: